Why data-indexing is a problem for AI-powered search

Most AI-powered search or knowledge retrieval tools use data-indexing. It’s chosen because it’s supposed to provide quick and efficient retrieval of relevant data.

Typically, it works by copying data to a vector database, which uses algorithms to index and store numerical representations of the data (known as vector embeddings) so that it can be retrieved more efficiently.
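As a rough illustration of that pipeline, the sketch below copies records from a source application into an in-memory index alongside their vector representations. It is a toy under stated assumptions: `embed` is a hypothetical stand-in for a real embedding model (it simply hashes words into buckets), and `VectorIndex` is not the API of any particular vector database. The point it makes is structural: the index ends up holding its own copy of the data.

```python
import hashlib
import math

DIM = 64  # toy size; real embedding models typically output hundreds or thousands of dimensions


def embed(text: str, dim: int = DIM) -> list[float]:
    """Hypothetical stand-in for an embedding model.

    Hashes each word into one of `dim` buckets (the "hashing trick") and
    L2-normalizes the counts so every vector has unit length.
    """
    vec = [0.0] * dim
    for word in text.lower().split():
        bucket = int(hashlib.sha256(word.encode()).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


class VectorIndex:
    """Toy in-memory index. Like a real index, it keeps a copy of the source text."""

    def __init__(self) -> None:
        self.documents: dict[str, str] = {}        # duplicated source data
        self.vectors: dict[str, list[float]] = {}  # numerical representations of it

    def add(self, doc_id: str, text: str) -> None:
        self.documents[doc_id] = text
        self.vectors[doc_id] = embed(text)


# Hypothetical records pulled from a source application.
source_app = {
    "crm-1": "Acme renewal is due in March",
    "wiki-7": "Expense policy for international travel",
}

index = VectorIndex()
for doc_id, text in source_app.items():
    index.add(doc_id, text)
```

After the loop runs, `index.documents` is a second copy of everything in `source_app`, stored wherever the index lives.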

Although data-indexing is the most common approach, it has a number of drawbacks, particularly for enterprise deployment: reduced security, poor precision, and complex data management.

1. Reduced data-security

One of the most significant problems of data-indexing for enterprises is that it risks compromising the security of company data.

The process of indexing effectively creates a copy of your data, usually on a third-party server. This may make searches quicker in some cases, but it enlarges the potential attack surface for hackers and greatly increases the potential damage of a data breach.

In addition, rather than leaving information distributed across multiple applications, an index brings all that data together in one place. Ordinarily, if a specific app has a data-security problem, the damage is limited to the information stored within that app. But if the data-index provider is breached, hackers could gain access to a much larger range of data, including everything you have indexed.

One might think that vector embeddings don't pose a major security risk, as the numerical representations don't mean anything on their own. However, a recent study from researchers at Cornell University found that 92% of short (32-token) text inputs could be recovered exactly from their embeddings. The conclusion was that "embeddings should be treated with the same precautions as raw data".
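The inversion method in that study is far more sophisticated than anything shown here. The toy below only illustrates why embeddings are not opaque: it assumes a deliberately simple, hypothetical bag-of-words embedding (each word mapped to a fixed pseudo-random vector, then summed) and shows that anyone holding the embedding and a candidate vocabulary can recover which words were in the text. `word_vector`, `embed`, and `invert` are illustrative helpers, not part of any real library.

```python
import random

DIM = 1024  # embedding size for this toy scheme


def word_vector(word: str, dim: int = DIM) -> list[float]:
    # Deterministic pseudo-random Gaussian vector per word (hypothetical embedding scheme).
    rng = random.Random(word)
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def embed(text: str) -> list[float]:
    # Bag-of-words embedding: the sum of the vectors of the distinct words.
    total = [0.0] * DIM
    for word in dict.fromkeys(text.lower().split()):
        for i, x in enumerate(word_vector(word)):
            total[i] += x
    return total


def invert(embedding: list[float], vocabulary: list[str]) -> set[str]:
    # A word present in the text contributes roughly DIM to its own dot product;
    # an absent word contributes only noise centred on zero.
    recovered = set()
    for word in vocabulary:
        score = sum(a * b for a, b in zip(embedding, word_vector(word)))
        if score > DIM / 2:
            recovered.add(word)
    return recovered


vocabulary = ["revenue", "forecast", "layoffs", "merger", "q3", "acme",
              "holiday", "party", "budget", "office", "lunch", "hiring"]
secret = "acme merger forecast q3"
print(sorted(invert(embed(secret), vocabulary)))
```

Real embedding models are not this linear, but the same principle applies: the numbers encode the text, so leaking them can leak the content.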

2. Poor precision

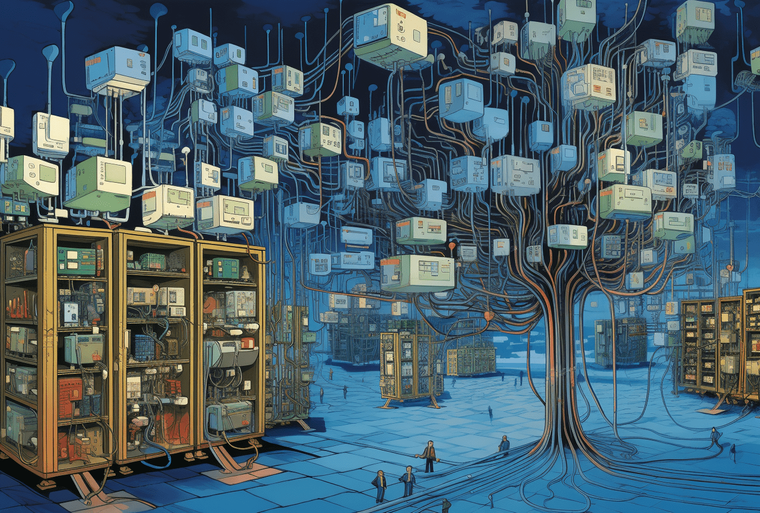

The second problem with data-indexing is precision and accuracy, which can degrade significantly on larger datasets. This is because data-indexes rely on a vector database to index information.

Vector databases work by transforming the source information into numerical vectors, which are stored as points in a high-dimensional space. Searches are resolved with nearest-neighbor algorithms: the query is converted into a vector in the same way, and the stored vector with the smallest distance to the query vector is returned as the nearest neighbor.
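The lookup described above can be sketched as an exact, brute-force search that measures the Euclidean distance from the query to every stored vector. This is an assumption for illustration: production vector databases usually use approximate nearest-neighbor (ANN) indexes such as HNSW to avoid scanning every vector, and many use cosine similarity rather than Euclidean distance. The three-dimensional vectors here are hypothetical.

```python
import math


def nearest_neighbor(query: list[float], vectors: dict[str, list[float]]) -> tuple[str, float]:
    """Exact (brute-force) nearest-neighbor search using Euclidean distance.

    Compares the query against every stored vector and returns the id of the
    closest one together with its distance.
    """
    if not vectors:
        raise ValueError("index is empty")
    best_id = min(vectors, key=lambda doc_id: math.dist(query, vectors[doc_id]))
    return best_id, math.dist(query, vectors[best_id])


# Hypothetical 3-dimensional embeddings; real ones have far more dimensions.
vectors = {
    "doc-a": [0.9, 0.1, 0.0],
    "doc-b": [0.1, 0.8, 0.2],
    "doc-c": [0.0, 0.2, 0.9],
}
query = [0.2, 0.7, 0.3]
print(nearest_neighbor(query, vectors))
```

Here `doc-b` wins because it differs from the query by only 0.1 in each coordinate.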

However, high-dimensional spaces behave counterintuitively. Their volume grows exponentially with the number of dimensions, so even a large dataset occupies them sparsely, and the distances from a query to different data points tend to converge in relative terms. As more data is added, more points crowd into this narrow band of distances, making it increasingly difficult to distinguish a genuine nearest neighbor from a non-relevant data point.
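This distance-concentration effect is easy to reproduce. The simulation below (an illustrative sketch, not a benchmark of any vector database) scatters random points uniformly in a unit hypercube and measures the relative contrast, (farthest distance minus nearest distance) divided by the nearest distance, from a random query. The function name and parameters are hypothetical choices for the demo.

```python
import math
import random


def relative_contrast(dim: int, n_points: int = 500, seed: int = 0) -> float:
    """(farthest - nearest) / nearest distance from a random query to random points in [0, 1]^dim."""
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    dists = [math.dist(query, [rng.random() for _ in range(dim)]) for _ in range(n_points)]
    return (max(dists) - min(dists)) / min(dists)


# As the number of dimensions grows, nearest and farthest points look more and more alike.
for dim in (2, 10, 100, 1000):
    print(dim, round(relative_contrast(dim), 3))
```

In two dimensions the nearest point is many times closer than the farthest; in hundreds of dimensions the ratio shrinks towards zero, which is what makes the "nearest" result fragile.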

The practical consequence of this loss of precision is that false positives become more common. The AI-generated answer may be grounded in real information from the business, but it is built on the wrong information.

This is different from the problem of hallucination, where a large language model (LLM) falls back on its training data to resolve a query and generates a completely fabricated response.

3. Complex data management

The third problem with data-indexing is the complex data management that comes with it. Enterprises generate large volumes of data from hundreds of software applications. This adds significant complexity (and cost) to the data management needed for data-indexing, not least because the index must be kept constantly up to date if it is to be relied upon.

While the solution provider will take some of the load, there is still a large burden of responsibility on the customer to make this possible. This might include:

- Data mapping - Creating accurate mappings from diverse data sources to guide the indexing process requires a deep understanding of the data and its structure.
- Managing duplications - Handling duplicate information across different systems is critical to maintain the integrity and usefulness of the indexed data.
- Monitoring data pipelines - Monitoring the data pipelines for errors, latency, or other issues is crucial for maintaining the reliability of the system.
- Data cleaning - Data may need to be cleaned or transformed to ensure it’s consistent and high-quality, which is fundamental for the effectiveness of the solution.
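To give a sense of the bookkeeping behind keeping an index current and managing duplicates, here is a minimal sketch that compares live source records with what was last indexed. It assumes each source can list its records by a stable ID and that the index stores a content hash per record; `content_hash` and `plan_sync` are hypothetical helpers, and a real pipeline would also need data mapping, cleaning, retries, and monitoring around them.

```python
import hashlib


def content_hash(text: str) -> str:
    # Normalize case and whitespace so trivially different copies hash the same.
    return hashlib.sha256(" ".join(text.lower().split()).encode()).hexdigest()


def plan_sync(source: dict[str, str], indexed: dict[str, str]) -> dict[str, list[str]]:
    """Work out what the index needs to do to match the live source.

    `source` maps record id -> current text; `indexed` maps record id -> hash
    recorded at the last indexing run. Duplicates are reported rather than
    indexed; resolving them is left to the caller.
    """
    plan: dict[str, list[str]] = {"add": [], "update": [], "delete": [], "duplicate": []}
    seen: dict[str, str] = {}  # hash -> first record id with that content
    for rec_id, text in sorted(source.items()):
        h = content_hash(text)
        if h in seen:
            plan["duplicate"].append(rec_id)
            continue
        seen[h] = rec_id
        if rec_id not in indexed:
            plan["add"].append(rec_id)       # new record: needs embedding
        elif indexed[rec_id] != h:
            plan["update"].append(rec_id)    # changed record: needs re-embedding
    plan["delete"] = sorted(set(indexed) - set(source))  # removed at source
    return plan


source = {"a": "Q3 plan", "b": "Travel  policy v2", "c": "q3 PLAN"}
indexed = {
    "a": content_hash("Q3 plan"),
    "b": content_hash("Travel policy v1"),
    "z": content_hash("old doc"),
}
print(plan_sync(source, indexed))
```

Even this toy has to be run continuously, for every connected application, for the index to stay trustworthy.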

Making this happen across an enterprise-sized dataset that’s constantly expanding and shifting is no easy task. For this reason, it’s entirely possible that you’ll need a dedicated team whose job is to manage internal data systems so that the indexing works as it should. This adds significant overhead to the real cost of the solution, or takes up valuable time that could be better spent elsewhere.

The alternative

The good news is that there are much better alternatives. Qatalog’s ActionQuery AI engine uses a combination of federated search (using real-time APIs) and retrieval-augmented generation (RAG). This approach has a number of advantages:

- Secure - It’s highly secure because no data transfer or duplication is needed: the relevant information is pulled directly from the source in real time and discarded after use.
- Accurate - Even with large datasets, it resolves queries accurately and consistently, making it much more scalable.
- Easy to manage - The data management is much less onerous, as there’s no data-index to maintain and, therefore, no need for constant updates from hundreds of tools.
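For a general picture of how federated search differs from indexing, the sketch below fans a query out to each source at query time, merges the hits, and keeps nothing afterwards. This is not Qatalog’s implementation: the connectors are hypothetical in-memory stand-ins for real-time API calls, and the RAG step (passing the merged hits to an LLM as context) is omitted.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# A connector takes a query and returns (title, snippet) hits from a live source.
Connector = Callable[[str], list[tuple[str, str]]]


def crm_search(query: str) -> list[tuple[str, str]]:
    # Hypothetical stand-in for a CRM's search API.
    records = {"Acme renewal": "Renewal due in March", "Globex lead": "Demo booked"}
    return [(t, s) for t, s in records.items() if query.lower() in f"{t} {s}".lower()]


def wiki_search(query: str) -> list[tuple[str, str]]:
    # Hypothetical stand-in for a wiki's search API.
    pages = {"Renewal playbook": "Steps for contract renewal"}
    return [(t, s) for t, s in pages.items() if query.lower() in f"{t} {s}".lower()]


def federated_search(query: str, connectors: dict[str, Connector]) -> list[tuple[str, str, str]]:
    """Query every source in parallel and merge the hits as (source, title, snippet).

    Nothing is written to a persistent store; results exist only for this call.
    """
    with ThreadPoolExecutor(max_workers=max(1, len(connectors))) as pool:
        futures = {name: pool.submit(fn, query) for name, fn in connectors.items()}
        return [(name, title, snippet)
                for name, fut in futures.items()
                for title, snippet in fut.result()]


hits = federated_search("renewal", {"crm": crm_search, "wiki": wiki_search})
print(hits)
```

The trade-off is that each query depends on the sources’ APIs being available and responsive, in exchange for never holding a separate copy of the data.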

Request a demo to learn more or download the ActionQuery whitepaper.